MtxVec v6

Multicore math engine for science and engineering

Overview

MtxVec is an object-oriented vectorized math library and the core of Dew Lab Studio. It features a comprehensive set of mathematical and statistical functions that execute at impressive speeds (see the testimonials from some of our many customers).

MtxVec math library is available for Delphi/C++ Builder and Visual Studio .NET environments.

Common Product Features

-

A comprehensive set of mathematical, signal processing and statistical functions

-

Substantial performance improvements of floating point math by exploiting the Intel AVX, AVX2 and AVX-512 instruction sets offered by modern CPUs.

-

Solutions based on it scale linearly with core count which makes it ideal for massively parallel systems.

-

Improved compactness and readability of code.

-

Use MtxVec prior knowledge to shorten the migration of your existing code to MtxVec patterns with AI.

-

Support for native 64bit execution gives free way to memory hungry applications

-

Significantly shorter development times by protecting the developer from a wide range of possible errors.

-

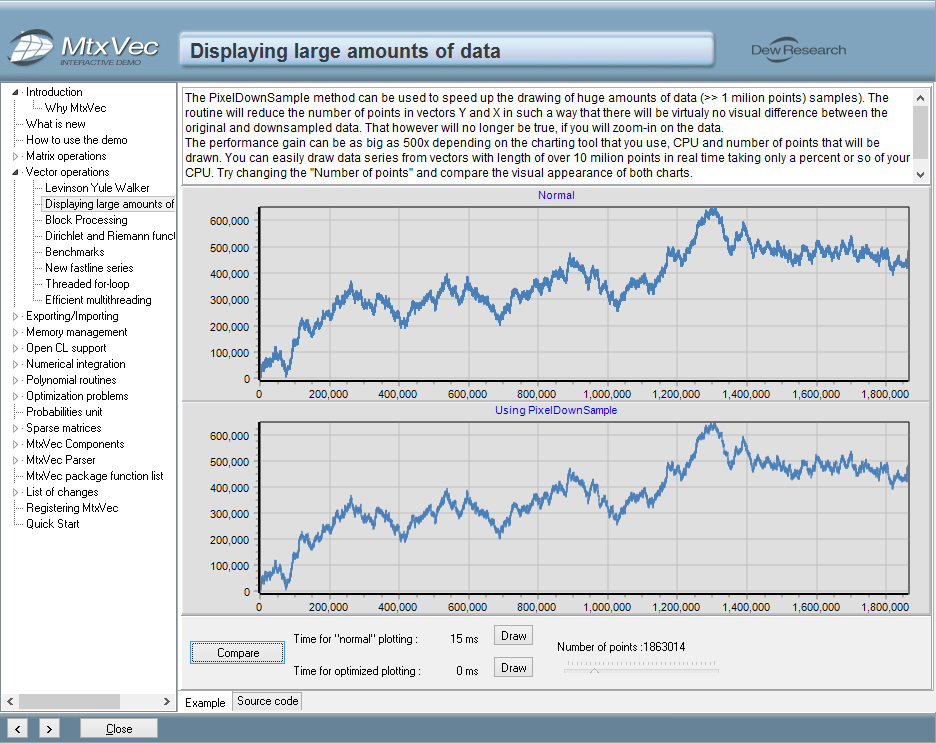

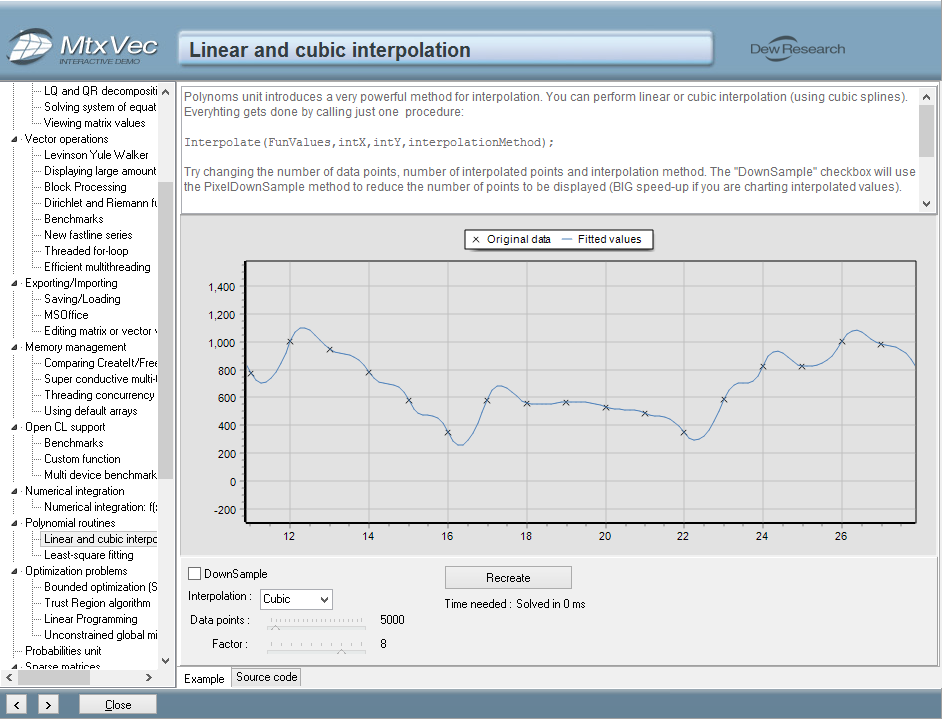

Direct integration with TeeChart© to simplify and speed up the charting.

-

No royalty fees for distribution of compiled binaries in products you develop

-

Floating point precision selectable at run-time.
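Run-time precision selection means the same code can store and process data either as 32-bit (single) or 64-bit (double) floats. The minimal illustration below uses Python's standard `array` module. This is an assumption for demonstration only and not the MtxVec API. The typecode is picked while the program runs, and single precision halves the storage per value at the cost of accuracy.

```python
from array import array


def make_buffer(values, single_precision):
    """Store values as 32-bit ("f") or 64-bit ("d") floats, chosen at run time."""
    typecode = "f" if single_precision else "d"
    return array(typecode, values)


for single in (True, False):
    buf = make_buffer([0.1], single)
    # itemsize is the storage per element in bytes
    print(buf.typecode, buf.itemsize, buf[0])
# f 4 0.10000000149011612
# d 8 0.1
```

Halving the storage per value also doubles how many values fit into each SIMD register.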

Having converted our models from Matlab, a speed increase of over 10x has been achieved. This core acceleration finally allows these models to be used in real-time automation control.

Optimized Functions

Performance Secrets

Code vectorization

The secret behind our performance is called "code vectorization". The library achieves substantial performance improvements in floating-point arithmetic by exploiting the SIMD (Single Instruction, Multiple Data) instruction sets of the CPU, from the Streaming SIMD Extensions (SSE) to AVX. A single SIMD instruction applies the same operation to several values packed into one register.
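Because a SIMD register holds several values side by side, one instruction processes as many values as fit in the register. The arithmetic below is a back-of-the-envelope model only. It is not MtxVec code and says nothing about measured speed-ups. It assumes the register widths of SSE2 (128 bits), AVX/AVX2 (256 bits) and AVX-512 (512 bits).

```python
import math

# Register width in bits for each x86 SIMD instruction-set family.
REGISTER_BITS = {"SSE2": 128, "AVX/AVX2": 256, "AVX-512": 512}
# Storage size of one floating-point value in bytes.
BYTES_PER_VALUE = {"single": 4, "double": 8}


def lanes(isa, precision):
    """How many floating-point values fit in one SIMD register."""
    return REGISTER_BITS[isa] // (8 * BYTES_PER_VALUE[precision])


def vector_instructions(n, isa, precision):
    """Ideal number of SIMD instructions for an element-wise operation on n
    values (ignores memory bandwidth, alignment and loop overhead)."""
    return math.ceil(n / lanes(isa, precision))


for isa in REGISTER_BITS:
    print(isa, lanes(isa, "single"), lanes(isa, "double"),
          vector_instructions(1000, isa, "double"))
# SSE2 4 2 500
# AVX/AVX2 8 4 250
# AVX-512 16 8 125
```

The model also shows why single precision can pay off: it doubles the number of lanes for the same register width.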

Super conductive memory management

Other Features

- Linear Algebra Package

  MtxVec makes extensive use of the Linear Algebra Package (LAPACK), which today is the de facto standard for linear algebra and is freely available from www.netlib.org. Because the package is a standard, different CPU makers provide performance-optimized versions of it. Linear algebra routines are the bottleneck of many frequently used algorithms, so this package is the part of the code where optimization pays off most. MtxVec uses the CPU-specific optimized version that Intel provides in its Math Kernel Library (MKL).

- Intel Performance Primitives

  Our library also makes extensive use of Intel Performance Primitives, which mainly accelerate the functions that are not based on linear algebra. The Intel Performance Primitives run on all x86-compatible CPUs, old and new, but achieve the highest performance on the Intel Core architecture.

What You Gain With MtxVec

- Low-level math functions are wrapped in simple-to-use code primitives.

- Write Vector/Matrix expressions with the standard *, /, + and - operators.

- Best-in-class performance when evaluating Vector/Matrix expressions.

- All primitives have internal, automatic memory management. This frees you from a wide range of possible errors, such as allocating insufficient memory, forgetting to free memory, or keeping too much memory allocated at the same time. Parameters are explicitly range-checked before they are passed to the DLL routines, which ensures that all internal DLL calls are safe to use.

- When calling linear algebra routines, the software automatically compensates for the fact that FORTRAN stores matrices by columns (column-major order), whereas the languages MtxVec targets store them by rows (row-major order).

- Many linear algebra functions take several parameters. The software fills in most of them automatically, so you spend less time studying each function before you can use it.

- The software is organized into a set of highly optimized "primitive" functions covering all the basic math operations. All higher-level algorithms are built on these basic operations, much as LAPACK is built on the Basic Linear Algebra Subprograms (BLAS).

- Although some compilers can target the SSE4, AVX2 and AVX-512 instruction sets natively, the resulting code is rarely as fast as a hand-optimized version.

- Many routines are multithreaded, including linear algebra routines, FFTs and sparse matrix solvers.

- All functions must pass very strict automated tests, which give the library the highest possible level of reliability, accuracy and error protection.

- When you write numerical algorithms, the compactness and readability of your code improve noticeably, and development times become significantly shorter.

- A high-performance expression parser/scripter that can work with vector and matrix variables.

- A Debugger Visualizer for faster inspection of variable contents during debugging.
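The list above mentions writing Vector/Matrix expressions with the standard operators. The sketch below shows the general idea with Python operator overloading. The `Vector` class and its methods are hypothetical illustrations, not the MtxVec API, and a pure-Python loop like this has none of the library's vectorized performance.

```python
class Vector:
    """Hypothetical element-wise vector type (not the MtxVec API)."""

    def __init__(self, values):
        self.values = [float(v) for v in values]

    def _apply(self, other, op):
        if isinstance(other, Vector):
            # Element-wise with another vector of the same length
            if len(other.values) != len(self.values):
                raise ValueError("vector lengths differ")
            return Vector(op(a, b) for a, b in zip(self.values, other.values))
        # Scalar operand: apply it to every element
        return Vector(op(a, other) for a in self.values)

    def __add__(self, other):
        return self._apply(other, lambda a, b: a + b)

    def __sub__(self, other):
        return self._apply(other, lambda a, b: a - b)

    def __mul__(self, other):
        return self._apply(other, lambda a, b: a * b)

    def __truediv__(self, other):
        return self._apply(other, lambda a, b: a / b)

    # Addition and multiplication commute, so "2 * v" can reuse them
    __radd__ = __add__
    __rmul__ = __mul__

    def __repr__(self):
        return f"Vector({self.values})"


a = Vector([1, 2, 3])
b = Vector([4, 5, 6])
c = (a + b) * 2 - a / b   # reads like the math it computes
print(c)                  # Vector([9.75, 13.6, 17.5])
```

The length check before the element-wise loop mirrors, in miniature, the idea of validating parameters before any low-level work is done.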
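Two items above, compensating for FORTRAN's column-major storage and filling in routine parameters automatically, can be illustrated together. In this sketch, `gemv_colmajor` is a hypothetical, simplified stand-in modeled on the BLAS `dgemv` routine (its `INCX`/`INCY` stride arguments are omitted), and `matvec` is a hypothetical wrapper. Neither reflects how MtxVec is implemented internally. The wrapper checks dimensions first, derives `m`, `n` and `lda` itself, and relies on the fact that a row-major m-by-n buffer read in column-major order is the n-by-m transpose. It therefore requests the transposed operation instead of copying the data.

```python
def gemv_colmajor(trans, m, n, alpha, a, lda, x, beta, y):
    """Hypothetical low-level routine modeled on BLAS dgemv:
    y := alpha*op(A)*x + beta*y, where A is m-by-n stored by columns in the
    flat list a, so element A[i][j] lives at a[i + j*lda]."""
    rows, cols = (m, n) if trans == "N" else (n, m)
    for r in range(rows):
        acc = 0.0
        for c in range(cols):
            # op(A)[r][c] is A[r][c] for "N" and A[c][r] for "T"
            i, j = (r, c) if trans == "N" else (c, r)
            acc += a[i + j * lda] * x[c]
        y[r] = alpha * acc + beta * y[r]


def matvec(rows, x):
    """Hypothetical wrapper: takes a row-major matrix (list of rows),
    range-checks it and fills in every low-level parameter itself."""
    m, n = len(rows), len(rows[0])
    if any(len(r) != n for r in rows) or len(x) != n:
        raise ValueError("dimension mismatch")
    flat = [v for r in rows for v in r]   # row-major: A[i][j] at i*n + j
    # Read column-major with lda = n, this buffer is the n-by-m matrix A^T,
    # so asking for op = "T" yields A*x without rearranging the data.
    y = [0.0] * m
    gemv_colmajor("T", n, m, 1.0, flat, n, x, 0.0, y)
    return y


print(matvec([[1, 2, 3], [4, 5, 6]], [1, 0, -1]))   # [-2.0, -2.0]
```

Requesting the transposed operation avoids an extra copy of the matrix; the alternative is to rearrange the buffer into column order before the call.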
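The item on "primitive" functions describes layering higher-level algorithms on a small set of basic operations, as LAPACK does on BLAS. The following is a minimal pure-Python sketch of that layering. The helpers are named after the BLAS level-1 routines `ddot`, `daxpy`, `dscal` and `dnrm2` but have simplified signatures (no length or stride arguments). Modified Gram-Schmidt orthonormalization is then written solely in terms of them. This is an illustration, not MtxVec source code.

```python
import math


# Level-1 "primitives" (simplified stand-ins for ddot, daxpy, dscal, dnrm2)
def dot(x, y):
    return sum(a * b for a, b in zip(x, y))


def axpy(alpha, x, y):
    """y := alpha*x + y, in place."""
    for i, xi in enumerate(x):
        y[i] += alpha * xi


def scal(alpha, x):
    """x := alpha*x, in place."""
    for i in range(len(x)):
        x[i] *= alpha


def nrm2(x):
    return math.sqrt(dot(x, x))


def gram_schmidt(vectors, tol=1e-12):
    """Higher-level algorithm built only from the primitives above:
    modified Gram-Schmidt orthonormalization."""
    basis = []
    for v in vectors:
        w = list(v)                      # work on a copy
        for q in basis:
            axpy(-dot(q, w), q, w)       # remove the component along q
        norm = nrm2(w)
        if norm < tol:
            continue                     # linearly dependent, skip it
        scal(1.0 / norm, w)
        basis.append(w)
    return basis


Q = gram_schmidt([[1.0, 1.0, 0.0], [1.0, 0.0, 1.0], [2.0, 1.0, 1.0]])
print(len(Q))   # 2: the third vector is the sum of the first two
```

Because the algorithm only calls the primitives, speeding up those four functions (for example with SIMD) speeds up everything built on top of them.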