MtxVec v6

Quickly deliver 10x faster Delphi, C# or C++ code with AI conversion to MtxVec

Comprehensive and fast numerical math library

Support for VS.NET, Embarcadero Delphi and C++ Builder

Statistical and DSP add-ons

Latest News

Dew Lab Studio 2026 for Delphi and C++Builder


We are happy to announce the release of Dew Lab Studio 2026 for Delphi and C++Builder. This release is special: it easily qualifies as the biggest feature improvement of the last decade, and yes, it was facilitated by the rise in AI tool capabilities. Perhaps for the first time in history, Delphi has an IEEE 754-2008 compliant scalar math library for Win32 and Win64 that is not only as accurate as the silicon allows, but also faster than the best C/C++ runtime libraries available today. The margin is small (about 20%), but it means we have at least a solid match. By comparison, functions such as sin, cos, exp and ln are about 2x faster than in the latest version of the .NET runtime and roughly 7x faster than those shipped with Delphi. The vectorized versions still maintain an advantage (5x), but the world truly changes for all those algorithms that already ran in Delphi but could not be vectorized. The combined side effects are hard to overestimate:

- The MtxVec Expression parser, which was already very fast as a formula evaluator and scripting engine, gets an additional boost.

- The MtxVec Core Math library, which runs without any dependency on external DLLs, profits the most, with all key features being 2-3x faster.

- Both add-on products for MtxVec, the signal processing library (DSP Master) and the statistical library (Stats Master) benefit proportionally.

- Delphi developers working in science and engineering finally get a scalar math library that matches, and in some cases exceeds, the numerical accuracy thus far available only to C/C++, Python, Matlab, Mathematica and the like.

Numerical library for Delphi and .NET developers

Dew Research develops mathematical software for advanced scientific computing, trusted by many customers. MtxVec for Delphi, C++ Builder or .NET is an alternative to products like Matlab, LabView, OMatrix, SciLab, etc. We offer a high performance math library, statistics library and digital signal processing library (dsp library) for:

- Embarcadero/CodeGear Delphi and C++Builder numerical libraries and components

- Microsoft .NET components -- including Visual Studio add-ons and a .NET numerical library for C++, C#, and Visual Basic

Product Features

-

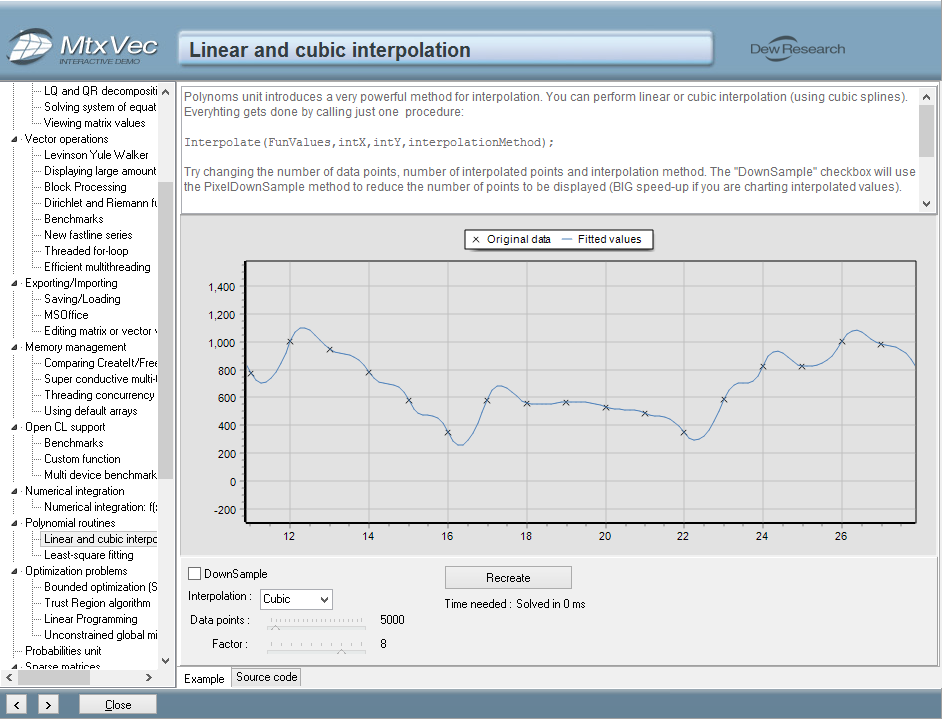

A comprehensive set of mathematical, signal processing and statistical functions

-

Substantial performance improvements of floating point math by exploiting the SSE4.2, AVX, AVX2, AVX512 instruction sets offered by modern CPUs.

-

Solutions based on it scale linearly with core count which makes it ideal for massively parallel systems.

-

Improved compactness and readability of code.

-

Support for native 64bit execution gives free way to memory hungry applications

-

Significantly shorter development times by protecting the developer from a wide range of possible errors.

-

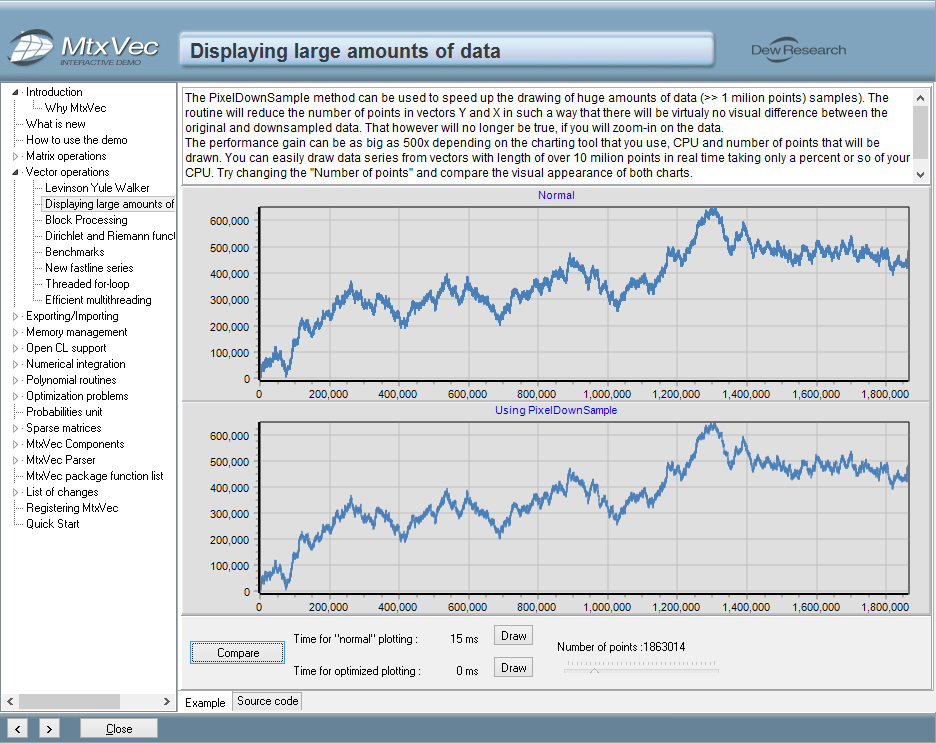

Direct integration with TeeChart© to simplify and speed up the charting.

-

No royalty fees for distribution of compiled binaries in products you develop

Optimized Functions

Performance Secrets

Code vectorization

The library achieves substantial performance improvements in floating point arithmetic by exploiting the CPU's SIMD instruction sets: SSE4.2, AVX, AVX2 and AVX512. (SIMD = Single Instruction, Multiple Data.)
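To make the idea of SIMD concrete, here is a minimal C++ sketch of the data layout that vectorized code relies on. It is not MtxVec code and does not use the MtxVec API: `multiply_add` and `kLanes` are hypothetical names chosen for illustration. The sketch assumes a lane width of four doubles, which matches one 256-bit AVX register; whether a compiler actually emits SIMD instructions for it depends on the compiler and its optimization flags.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical lane width (an assumption for illustration): a 256-bit AVX
// register holds four 64-bit doubles.
constexpr std::size_t kLanes = 4;

// Hypothetical helper, not part of MtxVec: computes y[i] = a[i] * x[i] + b[i].
// The main loop handles kLanes elements per iteration, mirroring how a single
// SIMD instruction applies the same operation to several values at once.
std::vector<double> multiply_add(const std::vector<double>& a,
                                 const std::vector<double>& x,
                                 const std::vector<double>& b) {
    assert(a.size() == x.size() && x.size() == b.size());
    const std::size_t n = x.size();
    std::vector<double> y(n);

    std::size_t i = 0;
    // Blocked part: each outer iteration covers one full group of kLanes
    // elements with no dependency between lanes.
    for (; i + kLanes <= n; i += kLanes) {
        for (std::size_t lane = 0; lane < kLanes; ++lane) {
            y[i + lane] = a[i + lane] * x[i + lane] + b[i + lane];
        }
    }
    // Scalar tail: leftover elements when n is not a multiple of kLanes.
    for (; i < n; ++i) {
        y[i] = a[i] * x[i] + b[i];
    }
    return y;
}
```

The point of the blocked loop is that every lane performs the same independent operation, which is the property a vectorizing compiler or a hand-tuned library needs in order to map the work onto SSE or AVX registers. Algorithms with dependencies between consecutive elements do not fit this pattern, which is why fast scalar math functions matter for them.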